14.1 Generative Adversarial Networks

import os

import numpy as np

np.random.seed(123)

print("NumPy:{}".format(np.__version__))

import pandas as pd

print("Pandas:{}".format(pd.__version__))

import matplotlib as mpl

import matplotlib.pyplot as plt

from matplotlib.pylab import rcParams

rcParams['figure.figsize']=15,10

print("Matplotlib:{}".format(mpl.__version__))

import tensorflow as tf

tf.set_random_seed(123)

print("TensorFlow:{}".format(tf.__version__))

import keras

print("Keras:{}".format(keras.__version__))

NumPy:1.13.1

Pandas:0.21.0

Matplotlib:2.1.0

TensorFlow:1.4.0

Using TensorFlow backend.

Keras:2.0.9

DATASETSLIB_HOME = '../datasetslib'

import sys

if DATASETSLIB_HOME not in sys.path:
    sys.path.append(DATASETSLIB_HOME)

%reload_ext autoreload

%autoreload 2

import datasetslib

from datasetslib import util as dsu

datasetslib.datasets_root = os.path.join(os.path.expanduser('~'),'datasets')

Get the MNIST data

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets(os.path.join(datasetslib.datasets_root,'mnist'), one_hot=False)

x_train = mnist.train.images

x_test = mnist.test.images

y_train = mnist.train.labels

y_test = mnist.test.labels

pixel_size = 28

def norm(x):
    # Rescale pixel values from [0, 1] to [-1, 1], the range of the generator's tanh output
    return (x-0.5)/0.5

Extracting /home/armando/datasets/mnist/train-images-idx3-ubyte.gz

Extracting /home/armando/datasets/mnist/train-labels-idx1-ubyte.gz

Extracting /home/armando/datasets/mnist/t10k-images-idx3-ubyte.gz

Extracting /home/armando/datasets/mnist/t10k-labels-idx1-ubyte.gz

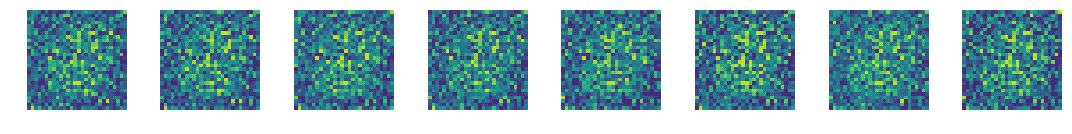
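The `norm` helper rescales MNIST pixels from [0, 1] to [-1, 1], the same range as the tanh output used by the generators later in this section. The following is a minimal NumPy sketch of that mapping. The `denorm` function is a hypothetical inverse added here for illustration only; it is not part of the original notebook.

```python
import numpy as np

def norm(x):
    """Rescale values from [0, 1] to [-1, 1] (same formula as the notebook)."""
    return (x - 0.5) / 0.5

def denorm(x):
    """Hypothetical inverse helper: map [-1, 1] back to [0, 1]."""
    return x * 0.5 + 0.5

pixels = np.array([0.0, 0.25, 0.5, 1.0])
scaled = norm(pixels)        # values now lie in [-1, 1]
restored = denorm(scaled)    # round trip back to the original values
print(scaled)
print(restored)
```

Mapping generated images back to [0, 1] is optional when plotting with `plt.imshow`, which rescales 2-D arrays to the colormap range by default.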

n_z = 256

z_test = np.random.uniform(-1.0,1.0,size=[8,n_z])

def display_images(images):
    # Show the images side by side in one row (the subplot grid is fixed at 8 columns)
    for i in range(images.shape[0]):
        plt.subplot(1, 8, i + 1)
        plt.imshow(images[i])
        plt.axis('off')
    plt.tight_layout()
    plt.show()

Simple GAN in TensorFlow

tf.reset_default_graph()

keras.backend.clear_session()

g_learning_rate = 0.00001

d_learning_rate = 0.01

n_x = 784

g_n_layers = 3

d_n_layers = 1

g_n_neurons = [256, 512, 1024]

d_n_neurons = [256]

d_params = {}

g_params = {}

activation = tf.nn.leaky_relu

w_initializer = tf.glorot_uniform_initializer

b_initializer = tf.zeros_initializer

z_p = tf.placeholder(dtype=tf.float32, name='z_p', shape=[None, n_z])

layer = z_p

with tf.variable_scope('g'):
    # Hidden layers: n_z -> 256 -> 512 -> 1024, each followed by leaky ReLU
    for i in range(0, g_n_layers):
        w_name = 'w_{0:04d}'.format(i)
        g_params[w_name] = tf.get_variable(
            name=w_name,
            shape=[n_z if i == 0 else g_n_neurons[i - 1], g_n_neurons[i]],
            initializer=w_initializer())
        b_name = 'b_{0:04d}'.format(i)
        g_params[b_name] = tf.get_variable(
            name=b_name, shape=[g_n_neurons[i]], initializer=b_initializer())
        layer = activation(
            tf.matmul(layer, g_params[w_name]) + g_params[b_name])
    # Output layer: project to n_x pixels and squash with tanh
    i = g_n_layers
    w_name = 'w_{0:04d}'.format(i)
    g_params[w_name] = tf.get_variable(
        name=w_name,
        shape=[g_n_neurons[i - 1], n_x],
        initializer=w_initializer())
    b_name = 'b_{0:04d}'.format(i)
    g_params[b_name] = tf.get_variable(
        name=b_name, shape=[n_x], initializer=b_initializer())
    g_logit = tf.matmul(layer, g_params[w_name]) + g_params[b_name]
    g_model = tf.nn.tanh(g_logit)

with tf.variable_scope('d'):
    # Create the discriminator weights once; they are shared by the real and fake branches below
    for i in range(0, d_n_layers):
        w_name = 'w_{0:04d}'.format(i)
        d_params[w_name] = tf.get_variable(
            name=w_name,
            shape=[n_x if i == 0 else d_n_neurons[i - 1], d_n_neurons[i]],
            initializer=w_initializer())
        b_name = 'b_{0:04d}'.format(i)
        d_params[b_name] = tf.get_variable(
            name=b_name, shape=[d_n_neurons[i]], initializer=b_initializer())
    i = d_n_layers
    w_name = 'w_{0:04d}'.format(i)
    d_params[w_name] = tf.get_variable(
        name=w_name, shape=[d_n_neurons[i - 1], 1], initializer=w_initializer())
    b_name = 'b_{0:04d}'.format(i)
    d_params[b_name] = tf.get_variable(
        name=b_name, shape=[1], initializer=b_initializer())

x_p = tf.placeholder(dtype=tf.float32, name='x_p', shape=[None, n_x])

layer = x_p

with tf.variable_scope('d'):
    # Discriminator branch applied to real images
    for i in range(0, d_n_layers):
        w_name = 'w_{0:04d}'.format(i)
        b_name = 'b_{0:04d}'.format(i)
        layer = activation(
            tf.matmul(layer, d_params[w_name]) + d_params[b_name])
        # In TensorFlow 1.x the second argument is keep_prob, so 30% of units are dropped
        layer = tf.nn.dropout(layer,0.7)
    i = d_n_layers
    w_name = 'w_{0:04d}'.format(i)
    b_name = 'b_{0:04d}'.format(i)
    d_logit_real = tf.matmul(layer, d_params[w_name]) + d_params[b_name]
    d_model_real = tf.nn.sigmoid(d_logit_real)

z = g_model

layer = z

with tf.variable_scope('d'):
    # Discriminator branch applied to generated images, reusing the same d_params
    for i in range(0, d_n_layers):
        w_name = 'w_{0:04d}'.format(i)
        b_name = 'b_{0:04d}'.format(i)
        layer = activation(
            tf.matmul(layer, d_params[w_name]) + d_params[b_name])
        # In TensorFlow 1.x the second argument is keep_prob, so 30% of units are dropped
        layer = tf.nn.dropout(layer,0.7)
    i = d_n_layers
    w_name = 'w_{0:04d}'.format(i)
    b_name = 'b_{0:04d}'.format(i)
    d_logit_fake = tf.matmul(layer, d_params[w_name]) + d_params[b_name]
    d_model_fake = tf.nn.sigmoid(d_logit_fake)

g_loss = -tf.reduce_mean(tf.log(d_model_fake))

d_loss = -tf.reduce_mean(tf.log(d_model_real) + tf.log(1 - d_model_fake))

g_optimizer = tf.train.AdamOptimizer(g_learning_rate)

d_optimizer = tf.train.GradientDescentOptimizer(d_learning_rate)

g_train_op = g_optimizer.minimize(g_loss, var_list=list(g_params.values()))

d_train_op = d_optimizer.minimize(d_loss, var_list=list(d_params.values()))
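The two loss expressions can be checked outside TensorFlow. The following is a minimal NumPy sketch, not the notebook's implementation: `gan_losses` is a hypothetical helper, and the small `eps` added inside the logarithms is an assumption introduced here to avoid `log(0)`; the original code uses no such term.

```python
import numpy as np

def sigmoid(t):
    """Logistic function, mirroring tf.nn.sigmoid on the discriminator logits."""
    return 1.0 / (1.0 + np.exp(-t))

def gan_losses(d_logit_real, d_logit_fake, eps=1e-8):
    """Hypothetical helper: same formulas as d_loss and g_loss, plus eps (an assumption)."""
    d_real = sigmoid(d_logit_real)   # D(x)
    d_fake = sigmoid(d_logit_fake)   # D(G(z))
    d_loss = -np.mean(np.log(d_real + eps) + np.log(1.0 - d_fake + eps))
    g_loss = -np.mean(np.log(d_fake + eps))
    return d_loss, g_loss

# An undecided discriminator outputs 0.5 everywhere (all logits are zero).
d_loss, g_loss = gan_losses(np.zeros(4), np.zeros(4))

# A confident discriminator: high logits for real images, low logits for fakes.
d_conf, g_conf = gan_losses(np.full(4, 5.0), np.full(4, -5.0))
print(d_loss, g_loss, d_conf, g_conf)
```

With all outputs at 0.5 the discriminator loss is 2·ln 2 and the generator loss is ln 2. As the discriminator becomes more confident, its own loss falls while the generator's loss rises.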

n_epochs = 400

batch_size = 100

n_batches = int(mnist.train.num_examples / batch_size)

n_epochs_print = 50

with tf.Session() as tfs:

tfs.run(tf.global_variables_initializer())

for epoch in range(n_epochs+1):

epoch_d_loss = 0.0

epoch_g_loss = 0.0

for batch in range(n_batches):

x_batch, _ = mnist.train.next_batch(batch_size)

x_batch = norm(x_batch)

z_batch = np.random.uniform(-1.0,1.0,size=[batch_size,n_z])

feed_dict = {x_p: x_batch,z_p: z_batch}

_,batch_d_loss = tfs.run([d_train_op,d_loss], feed_dict=feed_dict)

z_batch = np.random.uniform(-1.0,1.0,size=[batch_size,n_z])

feed_dict={z_p: z_batch}

_,batch_g_loss = tfs.run([g_train_op,g_loss], feed_dict=feed_dict)

epoch_d_loss += batch_d_loss

epoch_g_loss += batch_g_loss

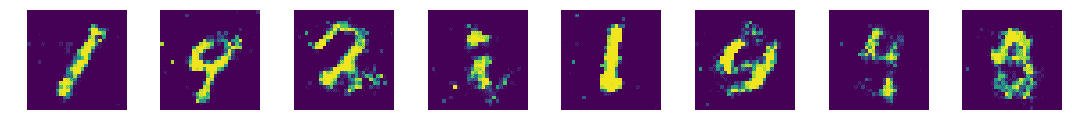

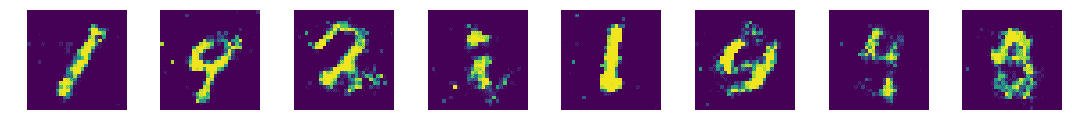

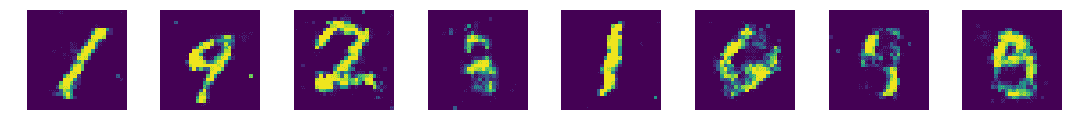

if epoch%n_epochs_print == 0:

average_d_loss = epoch_d_loss / n_batches

average_g_loss = epoch_g_loss / n_batches

print('epoch: {0:04d} d_loss = {1:0.6f} g_loss = {2:0.6f}'

.format(epoch,average_d_loss,average_g_loss))

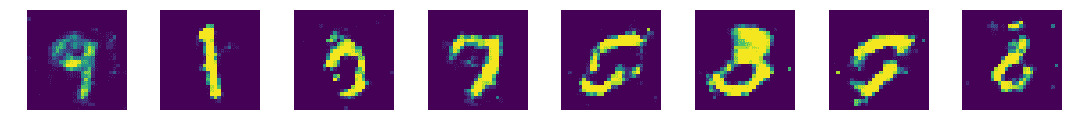

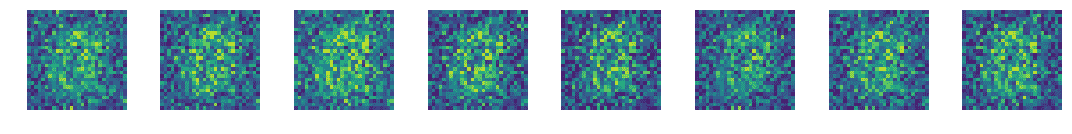

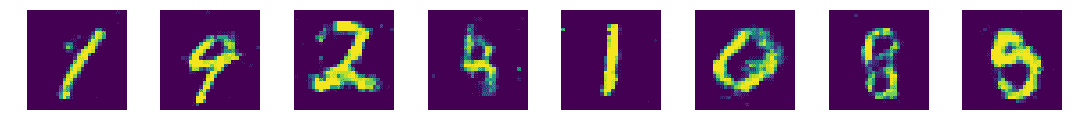

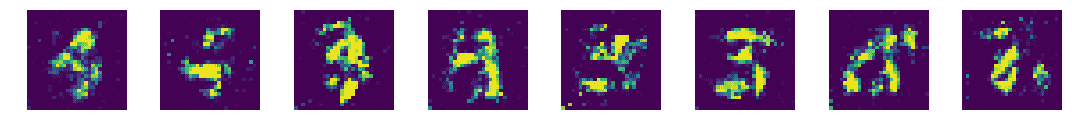

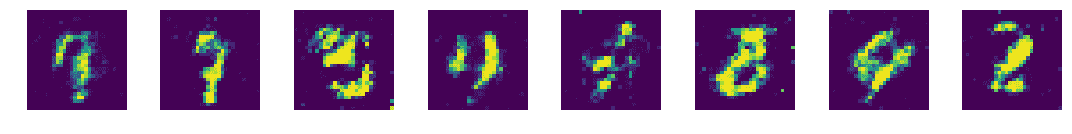

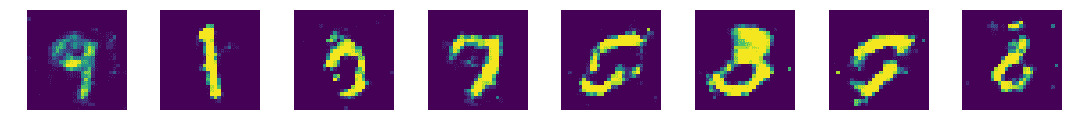

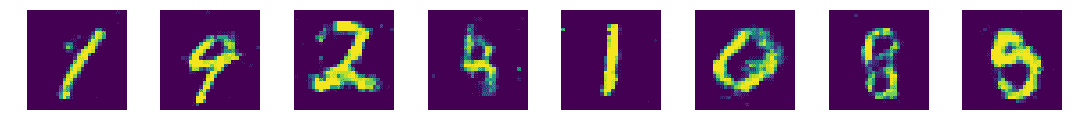

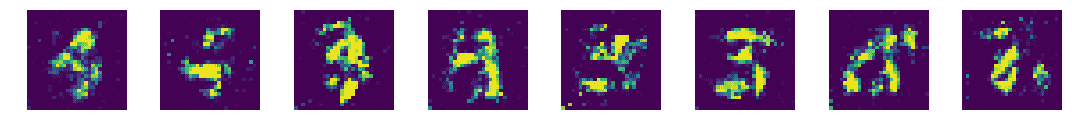

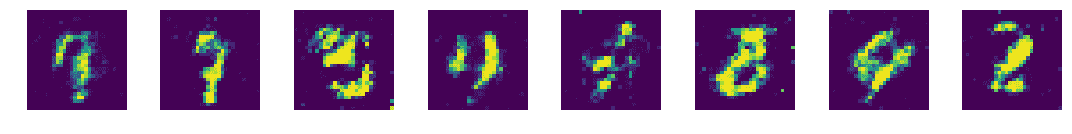

x_pred = tfs.run(g_model,feed_dict={z_p:z_test})

display_images(x_pred.reshape(-1,pixel_size,pixel_size))

epoch: 0000 d_loss = 0.374717 g_loss = 1.420409

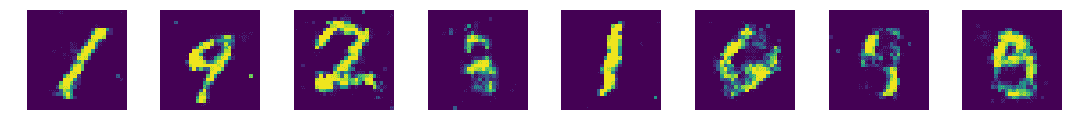

epoch: 0050 d_loss = 0.490554 g_loss = 2.878258

epoch: 0100 d_loss = 0.766314 g_loss = 1.976506

epoch: 0150 d_loss = 0.941174 g_loss = 1.498578

epoch: 0200 d_loss = 1.043525 g_loss = 1.285293

epoch: 0250 d_loss = 1.097761 g_loss = 1.190257

epoch: 0300 d_loss = 1.138826 g_loss = 1.109527

epoch: 0350 d_loss = 1.155886 g_loss = 1.082737

Simple GAN in Keras

import keras

from keras.layers import Dense, Input, LeakyReLU, Dropout

from keras.models import Sequential, Model

tf.reset_default_graph()

keras.backend.clear_session()

g_learning_rate = 0.00001

d_learning_rate = 0.01

n_x = 784

g_n_layers = 3

d_n_layers = 1

g_n_neurons = [256, 512, 1024]

d_n_neurons = [256]

g_model = Sequential()

g_model.add(Dense(units=g_n_neurons[0],
                  input_shape=(n_z,),
                  name='g_0'))
g_model.add(LeakyReLU())
for i in range(1,g_n_layers):
    g_model.add(Dense(units=g_n_neurons[i],
                      name='g_{}'.format(i)
                      ))
    g_model.add(LeakyReLU())
g_model.add(Dense(units=n_x, activation='tanh',name='g_out'))
print('Generator:')
g_model.summary()
g_model.compile(loss='binary_crossentropy',
                optimizer=keras.optimizers.Adam(lr=g_learning_rate)
                )

d_model = Sequential()

d_model.add(Dense(units=d_n_neurons[0],
                  input_shape=(n_x,),
                  name='d_0'
                  ))
d_model.add(LeakyReLU())
d_model.add(Dropout(0.3))
for i in range(1,d_n_layers):
    d_model.add(Dense(units=d_n_neurons[i],
                      name='d_{}'.format(i)
                      ))
    d_model.add(LeakyReLU())
    d_model.add(Dropout(0.3))
d_model.add(Dense(units=1, activation='sigmoid',name='d_out'))
print('Discriminator:')
d_model.summary()
d_model.compile(loss='binary_crossentropy',
                optimizer=keras.optimizers.SGD(lr=d_learning_rate)
                )

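# Freeze the discriminator before building the combined model, so that
# training gan_model updates only the generator weights
d_model.trainable=False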

z_in = Input(shape=(n_z,),name='z_in')

x_in = g_model(z_in)

gan_out = d_model(x_in)

gan_model = Model(inputs=z_in,outputs=gan_out,name='gan')

print('GAN:')

gan_model.summary()

gan_model.compile(loss='binary_crossentropy',
                  optimizer=keras.optimizers.Adam(lr=g_learning_rate)
                  )

Generator:
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
g_0 (Dense)                  (None, 256)               65792
_________________________________________________________________
leaky_re_lu_1 (LeakyReLU)    (None, 256)               0
_________________________________________________________________
g_1 (Dense)                  (None, 512)               131584
_________________________________________________________________
leaky_re_lu_2 (LeakyReLU)    (None, 512)               0
_________________________________________________________________
g_2 (Dense)                  (None, 1024)              525312
_________________________________________________________________
leaky_re_lu_3 (LeakyReLU)    (None, 1024)              0
_________________________________________________________________
g_out (Dense)                (None, 784)               803600
=================================================================
Total params: 1,526,288
Trainable params: 1,526,288
Non-trainable params: 0
_________________________________________________________________

Discriminator:
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
d_0 (Dense)                  (None, 256)               200960
_________________________________________________________________
leaky_re_lu_4 (LeakyReLU)    (None, 256)               0
_________________________________________________________________
dropout_1 (Dropout)          (None, 256)               0
_________________________________________________________________
d_out (Dense)                (None, 1)                 257
=================================================================
Total params: 201,217
Trainable params: 201,217
Non-trainable params: 0
_________________________________________________________________

GAN:
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
z_in (InputLayer)            (None, 256)               0
_________________________________________________________________
sequential_1 (Sequential)    (None, 784)               1526288
_________________________________________________________________
sequential_2 (Sequential)    (None, 1)                 201217
=================================================================
Total params: 1,727,505
Trainable params: 1,526,288
Non-trainable params: 201,217
_________________________________________________________________
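The parameter counts in the summaries can be reproduced by hand: a dense layer with `n_in` inputs and `n_out` units has `n_in * n_out` weights plus `n_out` biases. The short sketch below checks this against the layer sizes used above. The helper names `dense_params` and `mlp_params` are hypothetical and exist only for this illustration.

```python
def dense_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out

def mlp_params(sizes):
    """Total parameters of a stack of dense layers with the given layer widths."""
    return sum(dense_params(a, b) for a, b in zip(sizes[:-1], sizes[1:]))

# Generator: n_z=256 -> 256 -> 512 -> 1024 -> n_x=784
g_total = mlp_params([256, 256, 512, 1024, 784])
# Discriminator: n_x=784 -> 256 -> 1
d_total = mlp_params([784, 256, 1])

print(g_total, d_total, g_total + d_total)  # 1526288 201217 1727505
```

The discriminator's 201,217 parameters match the non-trainable count in the GAN summary, which is consistent with the discriminator being frozen inside the combined model.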

n_epochs = 400

batch_size = 100

n_batches = int(mnist.train.num_examples / batch_size)

n_epochs_print = 50

for epoch in range(n_epochs+1):

epoch_d_loss = 0.0

epoch_g_loss = 0.0

for batch in range(n_batches):

x_batch, _ = mnist.train.next_batch(batch_size)

x_batch = norm(x_batch)

z_batch = np.random.uniform(-1.0,1.0,size=[batch_size,n_z])

g_batch = g_model.predict(z_batch)

x_in = np.concatenate([x_batch,g_batch])

y_out = np.ones(batch_size*2)

y_out[:batch_size]=0.9

y_out[batch_size:]=0.1

d_model.trainable=True

batch_d_loss = d_model.train_on_batch(x_in,y_out)

z_batch = np.random.uniform(-1.0,1.0,size=[batch_size,n_z])

x_in=z_batch

y_out = np.ones(batch_size)

d_model.trainable=False

batch_g_loss = gan_model.train_on_batch(x_in,y_out)

epoch_d_loss += batch_d_loss

epoch_g_loss += batch_g_loss

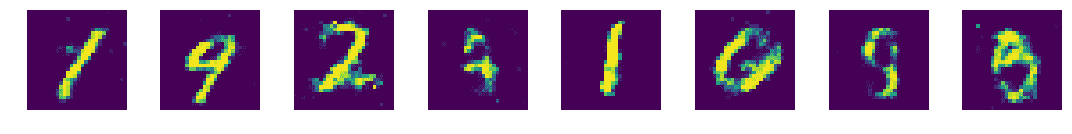

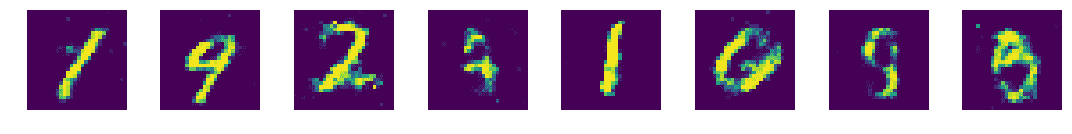

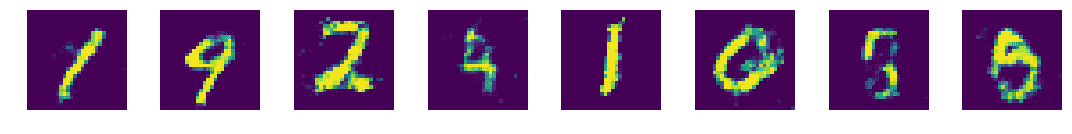

if epoch%n_epochs_print == 0:

average_d_loss = epoch_d_loss / n_batches

average_g_loss = epoch_g_loss / n_batches

print('epoch: {0:04d} d_loss = {1:0.6f} g_loss = {2:0.6f}'

.format(epoch,average_d_loss,average_g_loss))

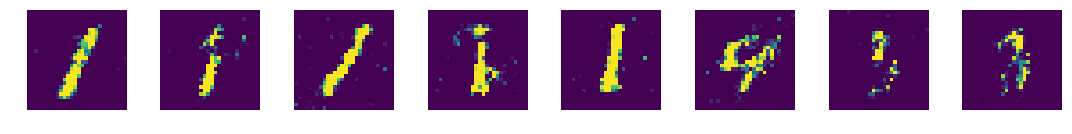

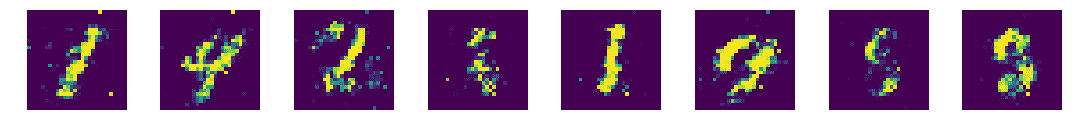

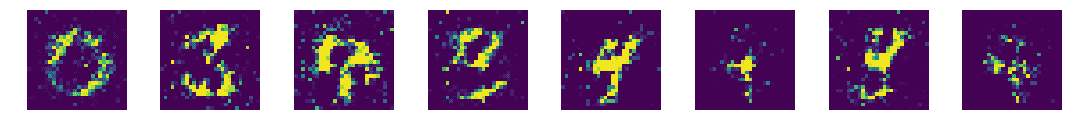

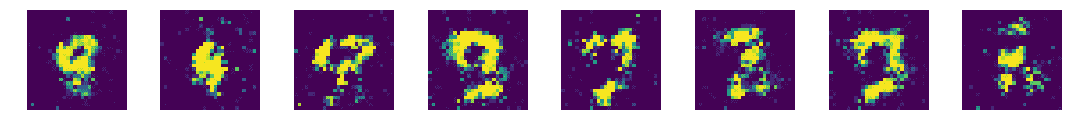

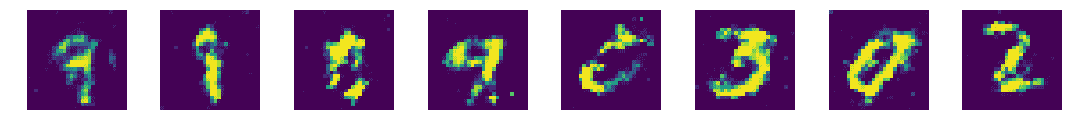

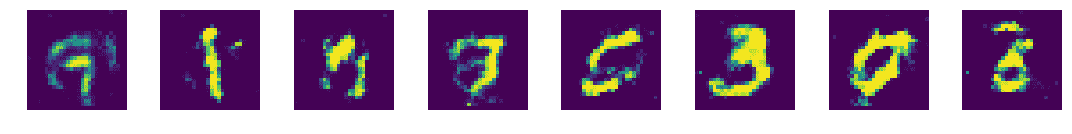

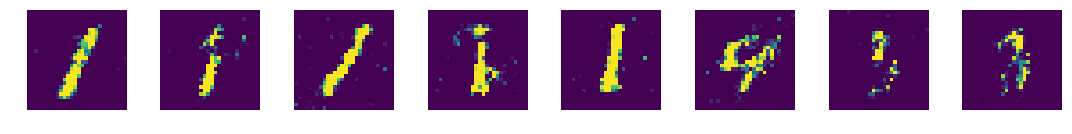

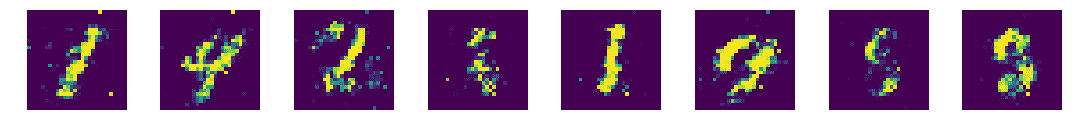

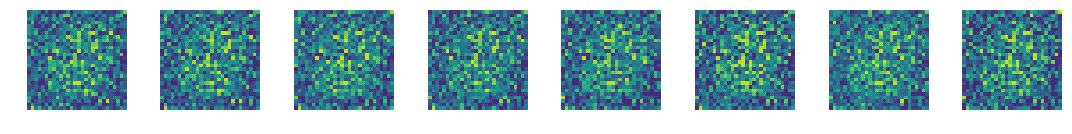

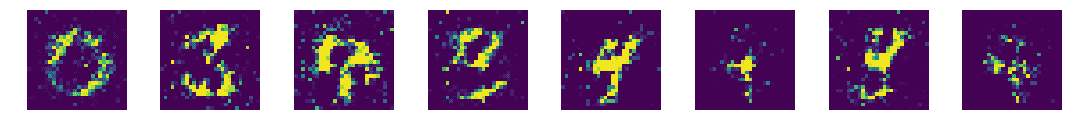

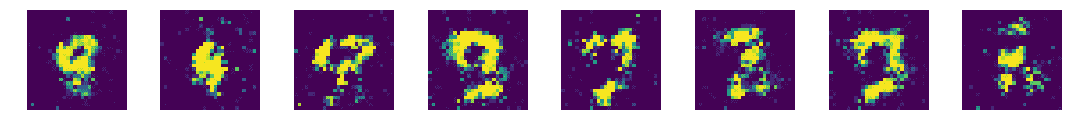

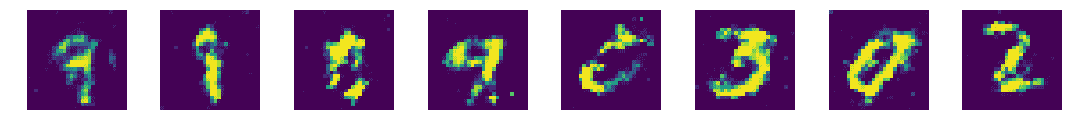

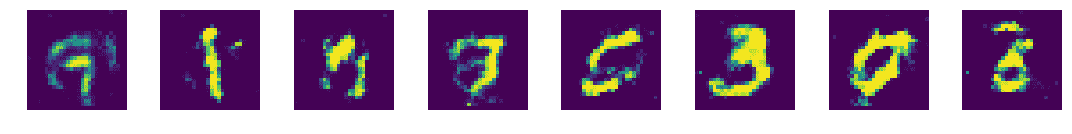

x_pred = g_model.predict(z_test)

display_images(x_pred.reshape(-1,pixel_size,pixel_size))

epoch: 0000 d_loss = 0.488523 g_loss = 0.868583

epoch: 0050 d_loss = 0.483392 g_loss = 1.512116

epoch: 0100 d_loss = 0.538450 g_loss = 1.290401

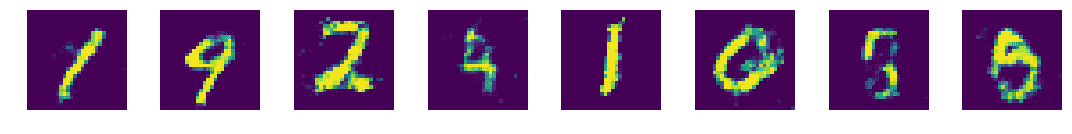

epoch: 0150 d_loss = 0.560499 g_loss = 1.200036

epoch: 0200 d_loss = 0.586289 g_loss = 1.097394

epoch: 0250 d_loss = 0.627239 g_loss = 0.942356

epoch: 0300 d_loss = 0.647910 g_loss = 0.863883

epoch: 0350 d_loss = 0.659564 g_loss = 0.830357
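The Keras loop trains the discriminator on a single array that stacks real and generated images, with soft targets of 0.9 and 0.1 instead of hard 1 and 0. The following is a minimal NumPy-only sketch of that batch assembly. The `make_d_batch` helper and the random stand-in data are hypothetical; in the notebook the real rows come from MNIST and the fake rows from `g_model.predict`.

```python
import numpy as np

def make_d_batch(x_real, x_fake, real_label=0.9, fake_label=0.1):
    """Hypothetical helper: stack real and fake samples with smoothed labels."""
    x_in = np.concatenate([x_real, x_fake])
    y_out = np.concatenate([
        np.full(len(x_real), real_label),   # targets for real images
        np.full(len(x_fake), fake_label),   # targets for generated images
    ])
    return x_in, y_out

# Random stand-ins for a real batch and a generated batch (3 images of 784 pixels each)
rng = np.random.RandomState(123)
x_real = rng.uniform(-1.0, 1.0, size=(3, 784))
x_fake = rng.uniform(-1.0, 1.0, size=(3, 784))

x_in, y_out = make_d_batch(x_real, x_fake)
print(x_in.shape, y_out)
```

Keeping the targets away from exactly 0 and 1 stops the binary cross-entropy from rewarding extreme discriminator outputs, a common heuristic for keeping GAN training stable.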