14.2 Generative Adversarial Networks - DCGAN

import math

import os

import numpy as np

np.random.seed(123)

print("NumPy:{}".format(np.__version__))

import pandas as pd

print("Pandas:{}".format(pd.__version__))

import matplotlib as mpl

import matplotlib.pyplot as plt

from matplotlib.pylab import rcParams

rcParams['figure.figsize']=15,10

print("Matplotlib:{}".format(mpl.__version__))

import tensorflow as tf

tf.set_random_seed(123)

print("TensorFlow:{}".format(tf.__version__))

import keras

print("Keras:{}".format(keras.__version__))

NumPy:1.13.1

Pandas:0.21.0

Matplotlib:2.1.0

TensorFlow:1.4.0

Using TensorFlow backend.

Keras:2.0.9

DATASETSLIB_HOME = '../datasetslib'

import sys

if DATASETSLIB_HOME not in sys.path:
    sys.path.append(DATASETSLIB_HOME)

%reload_ext autoreload

%autoreload 2

import datasetslib

from datasetslib import util as dsu

datasetslib.datasets_root = os.path.join(os.path.expanduser('~'),'datasets')

Get the MNIST data

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets(os.path.join(datasetslib.datasets_root,'mnist'), one_hot=False)

x_train = mnist.train.images

x_test = mnist.test.images

y_train = mnist.train.labels

y_test = mnist.test.labels

pixel_size = 28

def norm(x):
    return (x-0.5)/0.5

Extracting /home/armando/datasets/mnist/train-images-idx3-ubyte.gz

Extracting /home/armando/datasets/mnist/train-labels-idx1-ubyte.gz

Extracting /home/armando/datasets/mnist/t10k-images-idx3-ubyte.gz

Extracting /home/armando/datasets/mnist/t10k-labels-idx1-ubyte.gz
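As a small illustration of what `norm` does: the MNIST loader used here returns pixel intensities as floats in [0, 1], and `norm` rescales them to [-1, 1], the same range a `tanh` output layer produces. The sketch below repeats `norm` on plain Python floats and adds an inverse, `denorm`, which is a hypothetical helper and not part of the original notebook.

```python
def norm(x):
    # same formula as the notebook: maps [0, 1] onto [-1, 1]
    return (x - 0.5) / 0.5

def denorm(x):
    # hypothetical inverse (not in the original): maps [-1, 1] back onto [0, 1]
    return x * 0.5 + 0.5

pixels = [0.0, 0.25, 0.5, 1.0]
scaled = [norm(p) for p in pixels]
restored = [denorm(s) for s in scaled]
print(scaled)    # [-1.0, -0.5, 0.0, 1.0]
print(restored)  # [0.0, 0.25, 0.5, 1.0]
```

Both functions are plain arithmetic, so they should also work elementwise on NumPy arrays such as `x_train`.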

n_z = 256

z_test = np.random.uniform(-1.0,1.0,size=[8,n_z])

def display_images(images):
    for i in range(images.shape[0]):
        plt.subplot(1, 8, i + 1)
        plt.imshow(images[i])
        plt.axis('off')
    plt.tight_layout()
    plt.show()

DCGAN in Keras

import keras

from keras.layers import Dense, Input, LeakyReLU, Activation

from keras.layers import UpSampling2D, Conv2D, Reshape, Flatten, MaxPooling2D

from keras.models import Sequential, Model

tf.reset_default_graph()

keras.backend.clear_session()

g_learning_rate = 0.00001

d_learning_rate = 0.01

n_x = 784

g_n_layers = 3

d_n_layers = 1

g_n_filters = [64,32,16]

d_n_filters = [64]

n_width=28

n_height=28

n_depth=1

# generator: project z to a 5x5x128 feature map, then upsample
g_model = Sequential(name='g')
g_model.add(Dense(units=5*5*128,
                  input_shape=(n_z,),
                  name='g_in'))
g_model.add(Activation('tanh', name='g_in_act'))
g_model.add(Reshape(target_shape=(5,5,128),
                    input_shape=(5*5*128,),
                    name='g_in_reshape'))

# each block doubles the spatial size: 5 -> 10 -> 20 -> 40
for i in range(0, g_n_layers):
    g_model.add(UpSampling2D(size=[2,2],
                             name='g_{}_up2d'.format(i)))
    g_model.add(Conv2D(filters=g_n_filters[i],
                       kernel_size=(5,5),
                       padding='same',
                       name='g_{}_conv2d'.format(i)))
    g_model.add(Activation('tanh', name='g_{}_act'.format(i)))

# flatten the 40x40x16 map and project it to n_x = 784 values in [-1, 1]
g_model.add(Flatten(name='g_out_flatten'))
g_model.add(Dense(units=n_x, activation='tanh', name='g_out'))

print('Generator:')
g_model.summary()
g_model.compile(loss='binary_crossentropy',
                optimizer=keras.optimizers.Adam(lr=g_learning_rate))

# discriminator: reshape the flat 784-vector to 28x28x1, then conv + pool
d_model = Sequential(name='d')
d_model.add(Reshape(target_shape=(n_width,n_height,n_depth),
                    input_shape=(n_x,),
                    name='d_0_reshape'))

for i in range(0, d_n_layers):
    d_model.add(Conv2D(filters=d_n_filters[i],
                       kernel_size=(5,5),
                       padding='same',
                       name='d_{}_conv2d'.format(i)))
    d_model.add(Activation('tanh', name='d_{}_act'.format(i)))
    d_model.add(MaxPooling2D(pool_size=(2,2),
                             strides=(2,2),
                             name='d_{}_maxpool'.format(i)))

# a single sigmoid unit scores the input as real (near 1) or generated (near 0)
d_model.add(Flatten(name='d_out_flatten'))
d_model.add(Dense(units=1, activation='sigmoid', name='d_out'))

print('Discriminator:')
d_model.summary()
d_model.compile(loss='binary_crossentropy',
                optimizer=keras.optimizers.SGD(lr=d_learning_rate))

# stacked GAN: z -> generator -> discriminator, with the discriminator frozen
d_model.trainable=False
z_in = Input(shape=(n_z,), name='z_in')
x_in = g_model(z_in)
gan_out = d_model(x_in)

gan_model = Model(inputs=z_in, outputs=gan_out, name='gan')
print('GAN:')
gan_model.summary()
gan_model.compile(loss='binary_crossentropy',
                  optimizer=keras.optimizers.Adam(lr=g_learning_rate))

Generator:
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
g_in (Dense)                 (None, 3200)              822400
_________________________________________________________________
g_in_act (Activation)        (None, 3200)              0
_________________________________________________________________
g_in_reshape (Reshape)       (None, 5, 5, 128)         0
_________________________________________________________________
g_0_up2d (UpSampling2D)      (None, 10, 10, 128)       0
_________________________________________________________________
g_0_conv2d (Conv2D)          (None, 10, 10, 64)        204864
_________________________________________________________________
g_0_act (Activation)         (None, 10, 10, 64)        0
_________________________________________________________________
g_1_up2d (UpSampling2D)      (None, 20, 20, 64)        0
_________________________________________________________________
g_1_conv2d (Conv2D)          (None, 20, 20, 32)        51232
_________________________________________________________________
g_1_act (Activation)         (None, 20, 20, 32)        0
_________________________________________________________________
g_2_up2d (UpSampling2D)      (None, 40, 40, 32)        0
_________________________________________________________________
g_2_conv2d (Conv2D)          (None, 40, 40, 16)        12816
_________________________________________________________________
g_2_act (Activation)         (None, 40, 40, 16)        0
_________________________________________________________________
g_out_flatten (Flatten)      (None, 25600)             0
_________________________________________________________________
g_out (Dense)                (None, 784)               20071184
=================================================================
Total params: 21,162,496
Trainable params: 21,162,496
Non-trainable params: 0
_________________________________________________________________

Discriminator:
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
d_0_reshape (Reshape)        (None, 28, 28, 1)         0
_________________________________________________________________
d_0_conv2d (Conv2D)          (None, 28, 28, 64)        1664
_________________________________________________________________
d_0_act (Activation)         (None, 28, 28, 64)        0
_________________________________________________________________
d_0_maxpool (MaxPooling2D)   (None, 14, 14, 64)        0
_________________________________________________________________
d_out_flatten (Flatten)      (None, 12544)             0
_________________________________________________________________
d_out (Dense)                (None, 1)                 12545
=================================================================
Total params: 14,209
Trainable params: 14,209
Non-trainable params: 0
_________________________________________________________________

GAN:
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
z_in (InputLayer)            (None, 256)               0
_________________________________________________________________
g (Sequential)               (None, 784)               21162496
_________________________________________________________________
d (Sequential)               (None, 1)                 14209
=================================================================
Total params: 21,176,705
Trainable params: 21,162,496
Non-trainable params: 14,209
_________________________________________________________________
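The parameter counts in the three summaries can be checked by hand. The sketch below assumes the usual counting rules for layers with a bias term (a dense layer has `n_in * n_out + n_out` parameters; a 2-D convolution has `kernel_h * kernel_w * c_in * c_out + c_out`). The helper names `dense_params` and `conv2d_params` are hypothetical and not part of Keras.

```python
def dense_params(n_in, n_out):
    # weight matrix plus one bias per output unit
    return n_in * n_out + n_out

def conv2d_params(kernel_h, kernel_w, c_in, c_out):
    # one kernel per (input channel, output filter) pair plus one bias per filter
    return kernel_h * kernel_w * c_in * c_out + c_out

g_params = [
    dense_params(256, 5 * 5 * 128),    # g_in
    conv2d_params(5, 5, 128, 64),      # g_0_conv2d
    conv2d_params(5, 5, 64, 32),       # g_1_conv2d
    conv2d_params(5, 5, 32, 16),       # g_2_conv2d
    dense_params(40 * 40 * 16, 784),   # g_out
]
d_params = [
    conv2d_params(5, 5, 1, 64),        # d_0_conv2d
    dense_params(14 * 14 * 64, 1),     # d_out
]

g_total = sum(g_params)
d_total = sum(d_params)
print(g_params, g_total)   # per-layer counts and generator total
print(d_params, d_total)   # per-layer counts and discriminator total
print(g_total + d_total)   # total for the stacked GAN
```

By this count the final dense layer `g_out` accounts for roughly 95% of the generator's parameters, because it connects all 25,600 flattened activations to the 784 output pixels.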

n_epochs = 400

batch_size = 100

n_batches = int(mnist.train.num_examples / batch_size)

n_epochs_print = 50

for epoch in range(n_epochs+1):

epoch_d_loss = 0.0

epoch_g_loss = 0.0

for batch in range(n_batches):

x_batch, _ = mnist.train.next_batch(batch_size)

x_batch = norm(x_batch)

z_batch = np.random.uniform(-1.0,1.0,size=[batch_size,n_z])

g_batch = g_model.predict(z_batch)

x_in = np.concatenate([x_batch,g_batch])

y_out = np.ones(batch_size*2)

y_out[:batch_size]=0.9

y_out[batch_size:]=0.1

d_model.trainable=True

batch_d_loss = d_model.train_on_batch(x_in,y_out)

z_batch = np.random.uniform(-1.0,1.0,size=[batch_size,n_z])

x_in=z_batch

y_out = np.ones(batch_size)

d_model.trainable=False

batch_g_loss = gan_model.train_on_batch(x_in,y_out)

epoch_d_loss += batch_d_loss

epoch_g_loss += batch_g_loss

if epoch%n_epochs_print == 0:

average_d_loss = epoch_d_loss / n_batches

average_g_loss = epoch_g_loss / n_batches

print('epoch: {0:04d} d_loss = {1:0.6f} g_loss = {2:0.6f}'

.format(epoch,average_d_loss,average_g_loss))

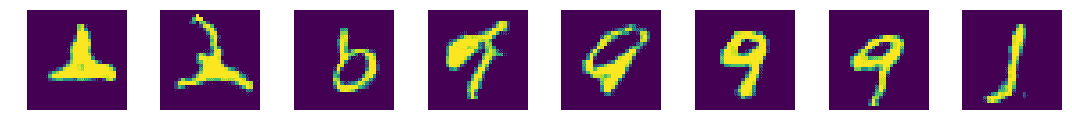

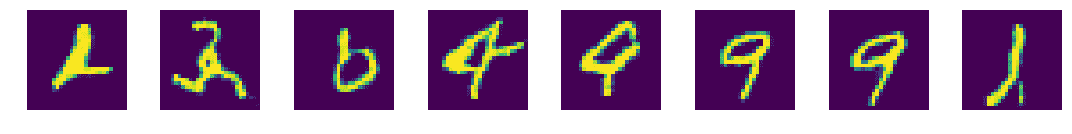

x_pred = g_model.predict(z_test)

display_images(x_pred.reshape(-1,pixel_size,pixel_size))

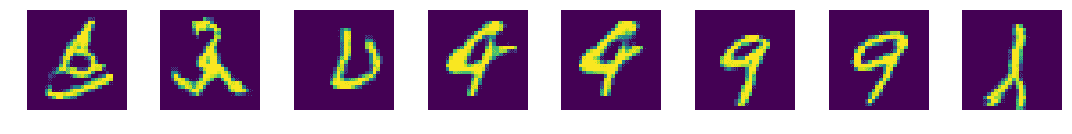

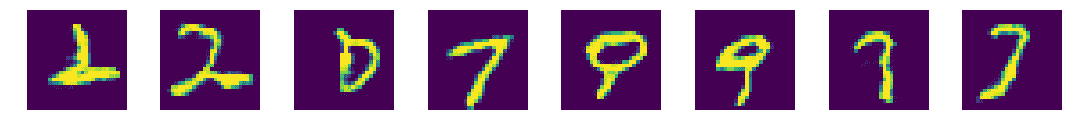

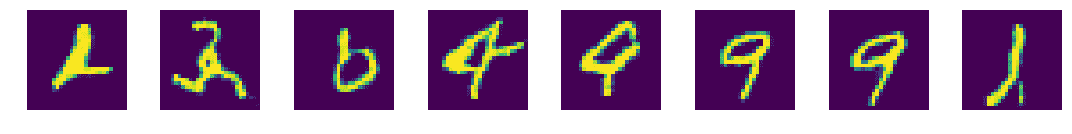

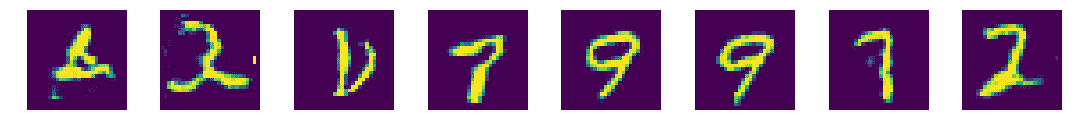

epoch: 0000 d_loss = 0.529010 g_loss = 1.180989

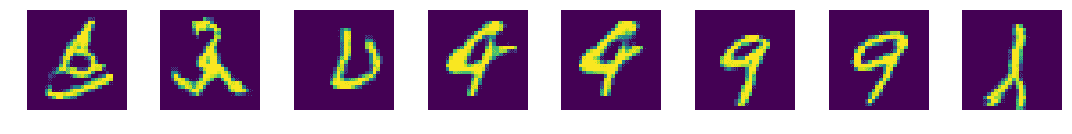

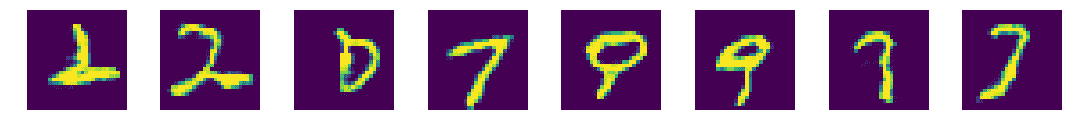

epoch: 0050 d_loss = 0.708614 g_loss = 0.753180

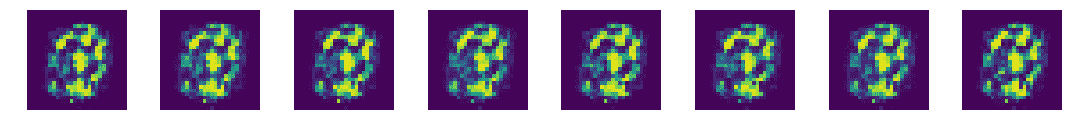

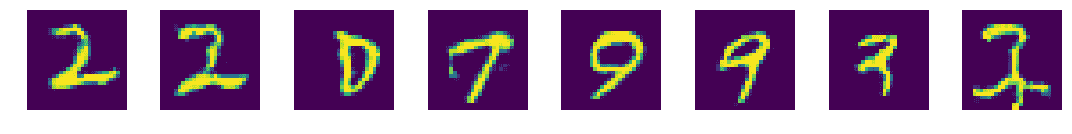

epoch: 0100 d_loss = 0.703850 g_loss = 0.693080

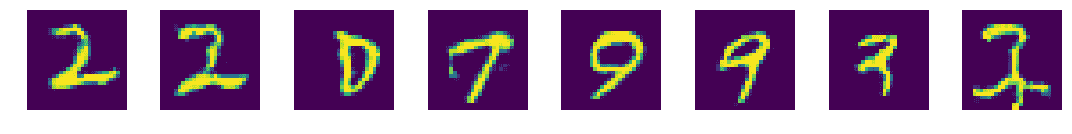

epoch: 0150 d_loss = 0.696364 g_loss = 0.698857

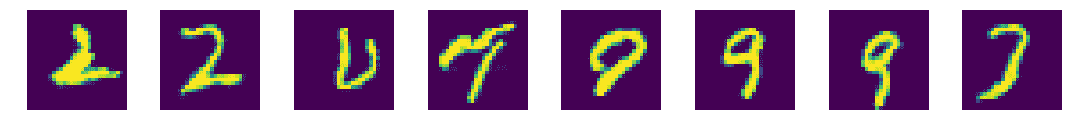

epoch: 0200 d_loss = 0.694226 g_loss = 0.700982

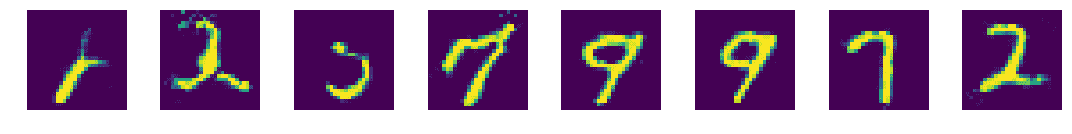

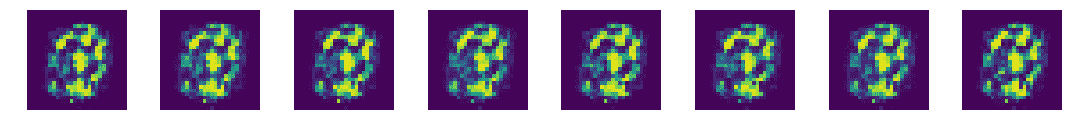

epoch: 0250 d_loss = 0.694548 g_loss = 0.699856

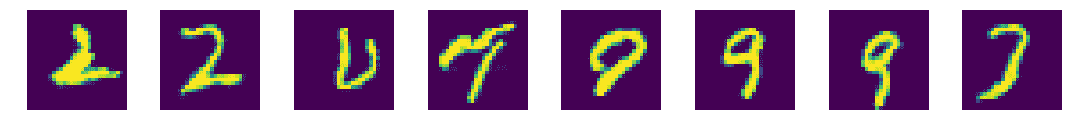

epoch: 0300 d_loss = 0.694598 g_loss = 0.697125

epoch: 0350 d_loss = 0.694443 g_loss = 0.695927

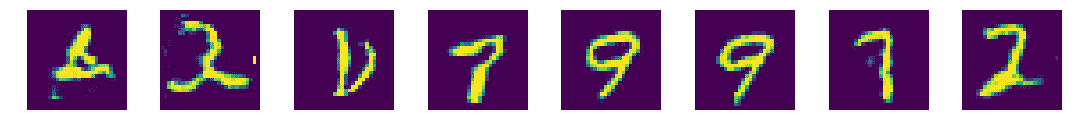

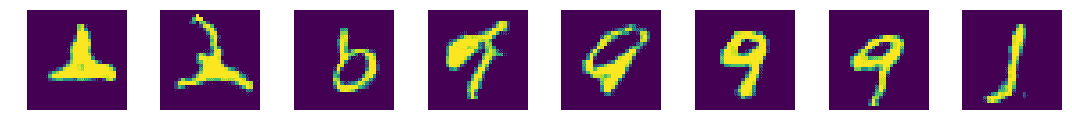

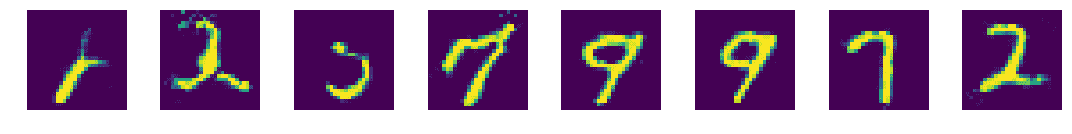

epoch: 0400 d_loss = 0.694062 g_loss = 0.695756
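From epoch 100 onward both logged losses sit close to 0.693. One plausible reading, offered here as an interpretation and not a claim made by the notebook, is that ln 2 ≈ 0.6931 is exactly the binary cross-entropy obtained when the discriminator outputs 0.5 for every input, whatever the target. The sketch below checks that arithmetic with a hypothetical `bce` helper for the targets used in the training loop (0.9 and 0.1 for the discriminator, 1 for the generator).

```python
import math

def bce(target, prob):
    # binary cross-entropy for a single (target, predicted probability) pair
    return -(target * math.log(prob) + (1.0 - target) * math.log(1.0 - prob))

p = 0.5  # assumption: the discriminator is maximally unsure about every input

d_loss = 0.5 * (bce(0.9, p) + bce(0.1, p))  # half real (0.9), half generated (0.1)
g_loss = bce(1.0, p)                         # generator step uses target 1

print(round(d_loss, 6), round(g_loss, 6), round(math.log(2), 6))
```

Under that assumption both values equal ln 2, slightly below the logged 0.694062 and 0.695756 at epoch 400.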