Visualizing CNN + Summary

import numpy as np
import tensorflow as tf
import matplotlib.pyplot as plt
from tensorflow.examples.tutorials.mnist import input_data
%matplotlib inline
# Download (if needed) and load MNIST with one-hot labels
mnist = input_data.read_data_sets('data/', one_hot=True)
trainimg = mnist.train.images
trainlabel = mnist.train.labels
testimg = mnist.test.images
testlabel = mnist.test.labels
print("Packages loaded.")

Extracting data/train-images-idx3-ubyte.gz
Extracting data/train-labels-idx1-ubyte.gz
Extracting data/t10k-images-idx3-ubyte.gz
Extracting data/t10k-labels-idx1-ubyte.gz
Packages loaded.

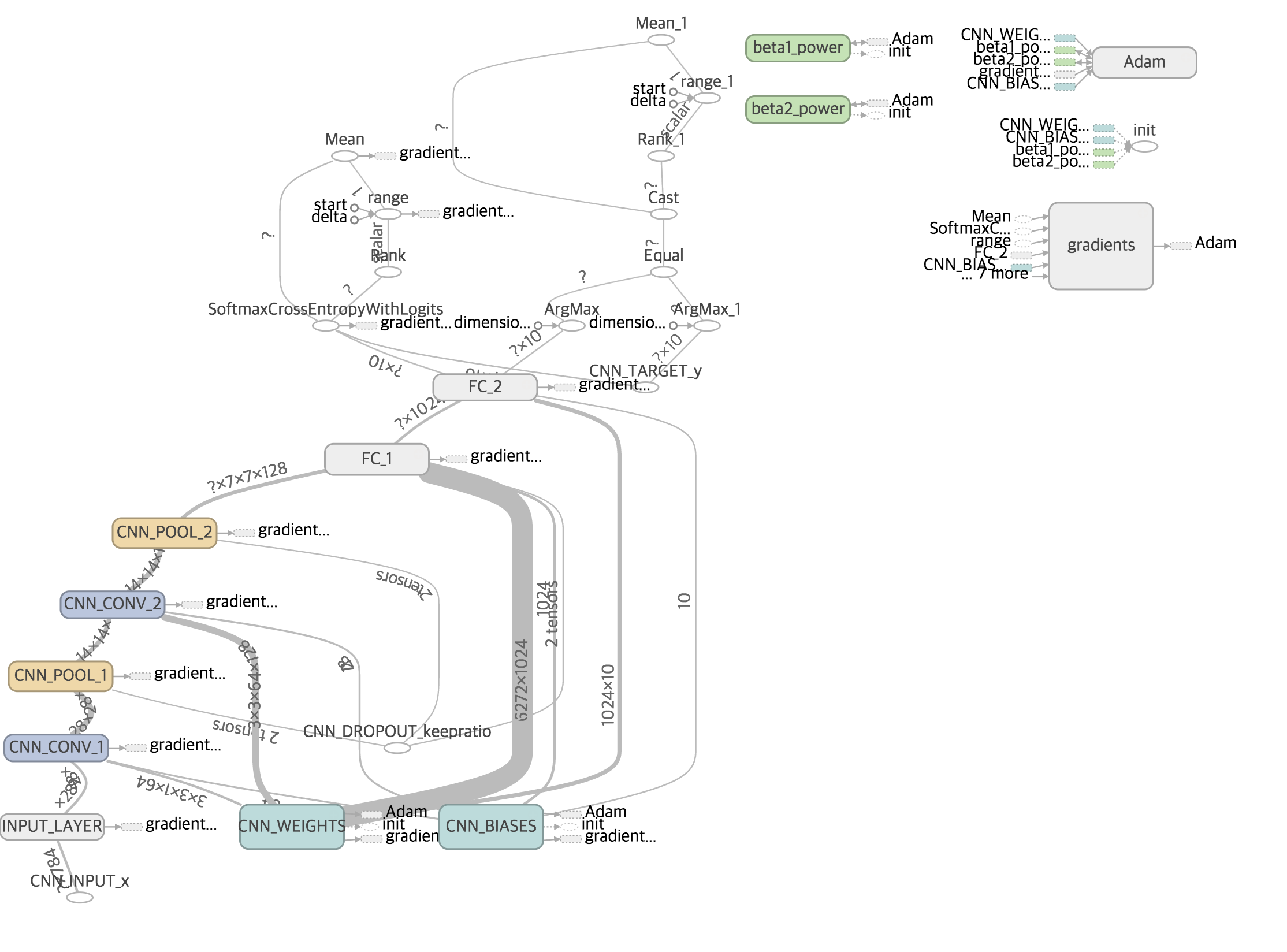

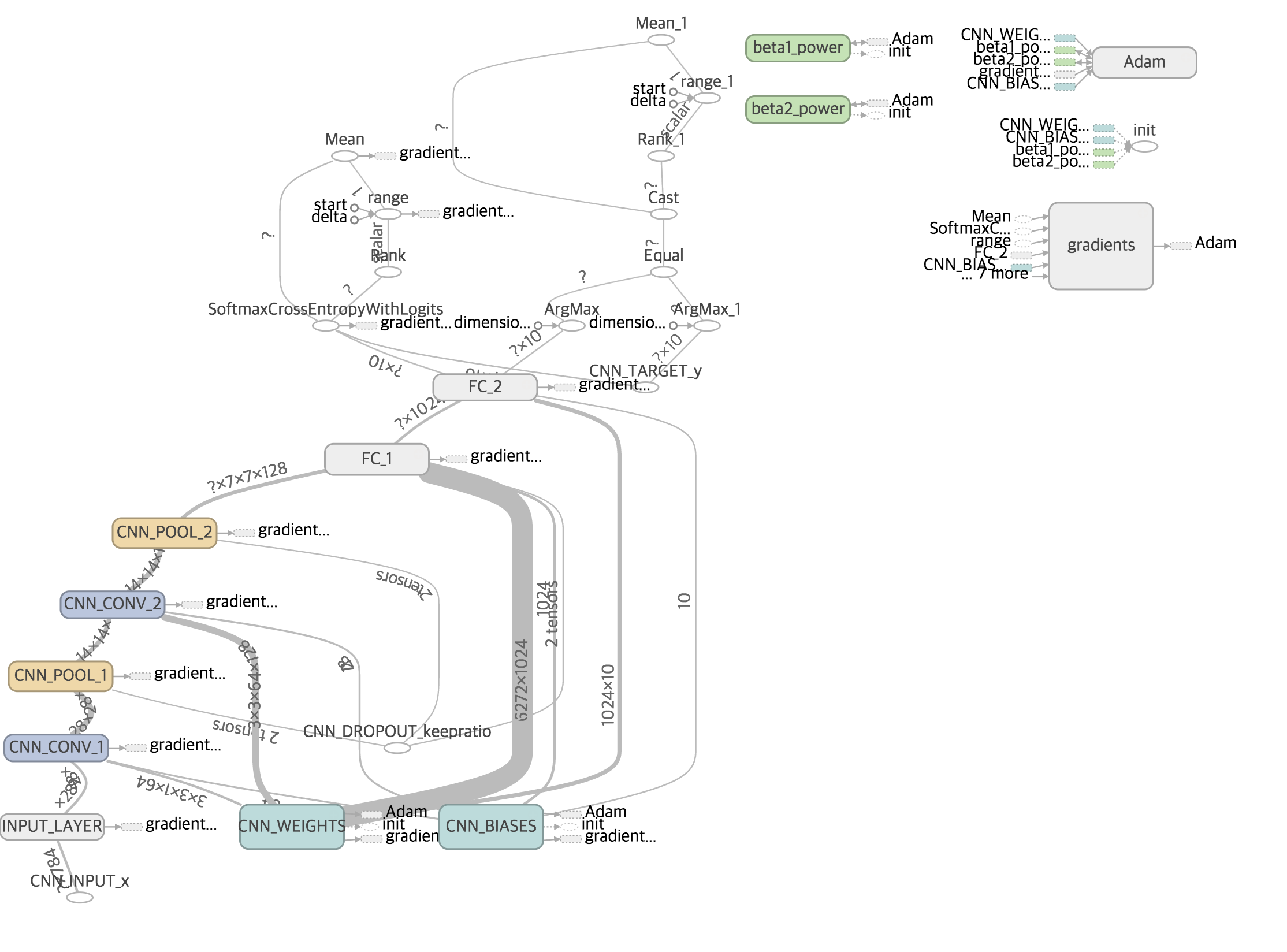

Define Networks

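The network wraps each group of ops in a tf.variable_scope("NAME") block, so every layer shows up as one named node in the TensorBoard graph.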

# Training parameters
learning_rate = 0.001
training_epochs = 5
batch_size = 100
display_step = 1
# Network parameters
n_input = 784
n_output = 10
with tf.variable_scope("CNN_WEIGHTS"):
    weights = {
        'wc1': tf.Variable(tf.random_normal([3, 3, 1, 64], stddev=0.1)),
        'wc2': tf.Variable(tf.random_normal([3, 3, 64, 128], stddev=0.1)),
        'wd1': tf.Variable(tf.random_normal([7*7*128, 1024], stddev=0.1)),
        'wd2': tf.Variable(tf.random_normal([1024, n_output], stddev=0.1))
    }
with tf.variable_scope("CNN_BIASES"):
    biases = {
        'bc1': tf.Variable(tf.random_normal([64], stddev=0.1)),
        'bc2': tf.Variable(tf.random_normal([128], stddev=0.1)),
        'bd1': tf.Variable(tf.random_normal([1024], stddev=0.1)),
        'bd2': tf.Variable(tf.random_normal([n_output], stddev=0.1))
    }

def conv_basic(_input, _w, _b, _keepratio):
    # Reshape flat 784-vectors into 28x28 single-channel images
    with tf.variable_scope("INPUT_LAYER"):
        _input_r = tf.reshape(_input, shape=[-1, 28, 28, 1])
    # Conv 3x3 (64 filters) + ReLU
    with tf.variable_scope("CNN_CONV_1"):
        _conv1 = tf.nn.relu(tf.nn.bias_add(
            tf.nn.conv2d(_input_r, _w['wc1'], strides=[1, 1, 1, 1], padding='SAME'),
            _b['bc1']))
    # 2x2 max pooling + dropout
    with tf.variable_scope("CNN_POOL_1"):
        _pool1 = tf.nn.max_pool(_conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1],
                                padding='SAME')
        _pool_dr1 = tf.nn.dropout(_pool1, _keepratio)
    # Conv 3x3 (128 filters) + ReLU
    with tf.variable_scope("CNN_CONV_2"):
        _conv2 = tf.nn.relu(tf.nn.bias_add(
            tf.nn.conv2d(_pool_dr1, _w['wc2'], strides=[1, 1, 1, 1], padding='SAME'),
            _b['bc2']))
    # 2x2 max pooling + dropout
    with tf.variable_scope("CNN_POOL_2"):
        _pool2 = tf.nn.max_pool(_conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1],
                                padding='SAME')
        _pool_dr2 = tf.nn.dropout(_pool2, _keepratio)
    # Flatten, then fully connected layer + ReLU + dropout
    with tf.variable_scope("FC_1"):
        _dense1 = tf.reshape(_pool_dr2, [-1, _w['wd1'].get_shape().as_list()[0]])
        _fc1 = tf.nn.relu(tf.nn.bias_add(tf.matmul(_dense1, _w['wd1']), _b['bd1']))
        _fc_dr1 = tf.nn.dropout(_fc1, _keepratio)
    # Output layer (logits)
    with tf.variable_scope("FC_2"):
        _out = tf.add(tf.matmul(_fc_dr1, _w['wd2']), _b['bd2'])
    # Return every intermediate tensor so it can be inspected later
    out = {
        'input_r': _input_r, 'conv1': _conv1, 'pool1': _pool1, 'pool_dr1': _pool_dr1,
        'conv2': _conv2, 'pool2': _pool2, 'pool_dr2': _pool_dr2, 'dense1': _dense1,
        'fc1': _fc1, 'fc_dr1': _fc_dr1, 'out': _out }
    return out
print("Network ready")

Network ready
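The 7*7*128 input size of wd1 follows from the strides in conv_basic. As a rough check, assuming that 'SAME' padding gives an output side of ceil(input / stride), the sketch below traces the image side through the network and counts the trainable parameters. The names same_out, layer_shapes and count are made up for this illustration; the shapes are copied from the weights and biases dictionaries.

```python
def same_out(size, stride):
    """Output side of a 'SAME'-padded conv or pool: ceil(size / stride)."""
    return -(-size // stride)

side = 28                    # MNIST images are 28x28
side = same_out(side, 1)     # conv1, stride 1: stays 28
side = same_out(side, 2)     # pool1, stride 2: 14
side = same_out(side, 1)     # conv2, stride 1: stays 14
side = same_out(side, 2)     # pool2, stride 2: 7
flat = side * side * 128     # conv2 produces 128 channels

# (weight shape, bias length) per layer, as in the dictionaries
layer_shapes = {
    'conv1': ([3, 3, 1, 64], 64),
    'conv2': ([3, 3, 64, 128], 128),
    'fc1': ([flat, 1024], 1024),
    'fc2': ([1024, 10], 10),
}

def count(shape):
    """Number of entries in a tensor with the given shape."""
    n = 1
    for d in shape:
        n *= d
    return n

params = {name: count(shape) + n_bias
          for name, (shape, n_bias) in layer_shapes.items()}
total = sum(params.values())

print("flattened size:", flat)
for name in ['conv1', 'conv2', 'fc1', 'fc2']:
    print(name, params[name])
print("total:", total)
```

Nearly all of the parameters sit in wd1, the first fully connected layer.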

Define functions

# Placeholders for images, one-hot labels and the dropout keep probability
x = tf.placeholder(tf.float32, [None, n_input], name="CNN_INPUT_x")
y = tf.placeholder(tf.float32, [None, n_output], name="CNN_TARGET_y")
keepratio = tf.placeholder(tf.float32, name="CNN_DROPOUT_keepratio")
# Logits, loss and optimizer (keyword arguments make the argument order explicit)
pred = conv_basic(x, weights, biases, keepratio)['out']
cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=pred, labels=y))
optm = tf.train.AdamOptimizer(learning_rate=learning_rate).minimize(cost)
# Accuracy: fraction of examples whose predicted class matches the label
corr = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
accr = tf.reduce_mean(tf.cast(corr, tf.float32))
init = tf.initialize_all_variables()
print("Functions ready")

Functions ready
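To make cost and accr concrete, here is a minimal pure-Python sketch of what the two ops compute: the mean softmax cross-entropy over a batch, and the fraction of examples whose argmax matches the label. The 3-class logits and labels are made-up values, and the helper names are hypothetical; this is not TensorFlow's implementation.

```python
import math

def softmax_cross_entropy(logits, labels):
    """Cross-entropy between labels and softmax(logits) for one example."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    log_probs = [z - log_sum for z in logits]
    return -sum(t * lp for t, lp in zip(labels, log_probs))

def argmax(values):
    """Index of the largest value (first one on ties)."""
    return max(range(len(values)), key=lambda i: values[i])

# Made-up batch of three examples with three classes
logits = [[2.0, 1.0, 0.1], [0.5, 2.5, 0.3], [1.2, 0.2, 0.1]]
labels = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]

losses = [softmax_cross_entropy(z, t) for z, t in zip(logits, labels)]
cost = sum(losses) / len(losses)             # like tf.reduce_mean over the batch
correct = [argmax(z) == argmax(t) for z, t in zip(logits, labels)]
accuracy = sum(correct) / len(correct)       # like accr

print("cost: %.4f  accuracy: %.3f" % (cost, accuracy))
```

The third example is misclassified (its largest logit is class 0, its label is class 2), so it contributes the largest loss and lowers the accuracy to 2/3.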

Summary

sess = tf.Session()
sess.run(init)
# Scalars to log for TensorBoard
tf.scalar_summary('cross entropy', cost)
tf.scalar_summary('accuracy', accr)
merged = tf.merge_all_summaries()
summary_writer = tf.train.SummaryWriter('/tmp/tf_logs/cnn_mnist',
                                        graph=sess.graph)
print("Summary ready")

Summary ready
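Each summary written to the log directory carries a step index, which TensorBoard uses as the x-axis. The training loop in the Run section passes epoch*total_batch+i. The sketch below checks that this gives one consecutive index per batch. The training-set size of 55,000 is an assumption about what read_data_sets returns by default; batch_size and training_epochs are the values set earlier, and global_step is a hypothetical helper name.

```python
def global_step(epoch, i, total_batch):
    """Index of batch i in the given epoch, counted from the start of training."""
    return epoch * total_batch + i

# Assumption: 55,000 training images; 100 per batch and 5 epochs as in the notebook
num_examples, batch_size, training_epochs = 55000, 100, 5
total_batch = num_examples // batch_size

steps = [global_step(epoch, i, total_batch)
         for epoch in range(training_epochs)
         for i in range(total_batch)]

print("batches per epoch:", total_batch)
print("first step:", steps[0], "last step:", steps[-1])
```

Because the index never repeats or skips, the curves from successive epochs join into one continuous line in TensorBoard.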

Run

print("Start!")
for epoch in range(training_epochs):
    avg_cost = 0.
    total_batch = int(mnist.train.num_examples/batch_size)
    for i in range(total_batch):
        batch_xs, batch_ys = mnist.train.next_batch(batch_size)
        # One training step with dropout on (keep probability 0.7)
        summary, _ = sess.run([merged, optm],
                              feed_dict={x: batch_xs, y: batch_ys, keepratio: 0.7})
        # Accumulate the epoch's average cost with dropout off
        avg_cost += sess.run(cost,
                             feed_dict={x: batch_xs, y: batch_ys, keepratio: 1.})/total_batch
        # Log the summary at the global step index
        summary_writer.add_summary(summary, epoch*total_batch+i)
    if epoch % display_step == 0:
        print("Epoch: %03d/%03d cost: %.9f" % (epoch, training_epochs, avg_cost))
        # Training accuracy is measured on the last mini-batch only
        train_acc = sess.run(accr, feed_dict={x: batch_xs, y: batch_ys, keepratio: 1.})
        print(" Training accuracy: %.3f" % (train_acc))
        test_acc = sess.run(accr, feed_dict={x: testimg, y: testlabel, keepratio: 1.})
        print(" Test accuracy: %.3f" % (test_acc))
print("Optimization Finished.")

Start!
Epoch: 000/005 cost: 0.352900247
 Training accuracy: 0.990
 Test accuracy: 0.981
Epoch: 001/005 cost: 0.047190106
 Training accuracy: 0.980
 Test accuracy: 0.988
Epoch: 002/005 cost: 0.031958400
 Training accuracy: 1.000
 Test accuracy: 0.990
Epoch: 003/005 cost: 0.022927662
 Training accuracy: 1.000
 Test accuracy: 0.990
Epoch: 004/005 cost: 0.018374201
 Training accuracy: 0.990
 Test accuracy: 0.991
Optimization Finished.
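The loop feeds keepratio 0.7 for the training step and 1.0 whenever it measures cost or accuracy. tf.nn.dropout keeps each element with probability keep_prob and scales the kept ones by 1/keep_prob, so the expected activation is the same in both modes. The pure-Python function below is a rough sketch of that behaviour under a fixed seed, not TensorFlow's code; dropout, rng and the all-ones activations are illustrative names and values.

```python
import random

def dropout(values, keep_prob, rng):
    """Inverted dropout: keep each value with probability keep_prob, scaled by 1/keep_prob."""
    if keep_prob >= 1.0:
        return list(values)  # evaluation mode: nothing is dropped
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]

rng = random.Random(0)           # fixed seed so the run is repeatable
activations = [1.0] * 10000      # made-up activations

train_out = dropout(activations, 0.7, rng)   # as in the training step
eval_out = dropout(activations, 1.0, rng)    # as in the accuracy measurements

kept_fraction = sum(1 for v in train_out if v != 0.0) / float(len(train_out))
mean_train = sum(train_out) / len(train_out)

print("kept fraction: %.3f" % kept_fraction)
print("mean with dropout: %.3f, without: %.3f"
      % (mean_train, sum(eval_out) / len(eval_out)))
```

This is why the accuracy logged by the summary during training, which is computed with dropout on, can look noisier than the printed accuracies measured with keepratio 1.0.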

Run TensorBoard from the command line

tensorboard --logdir=/tmp/tf_logs/cnn_mnist